Breaking: Meta Automates Kernel Optimization with AI Agent KernelEvolve

Meta today announced KernelEvolve, an autonomous AI agent that cuts the time needed to optimize low-level hardware kernels from weeks to hours. The system has already delivered an inference throughput improvement of over 60% for the Andromeda Ads model on NVIDIA GPUs and a 25% training throughput gain on Meta's custom MTIA chips.

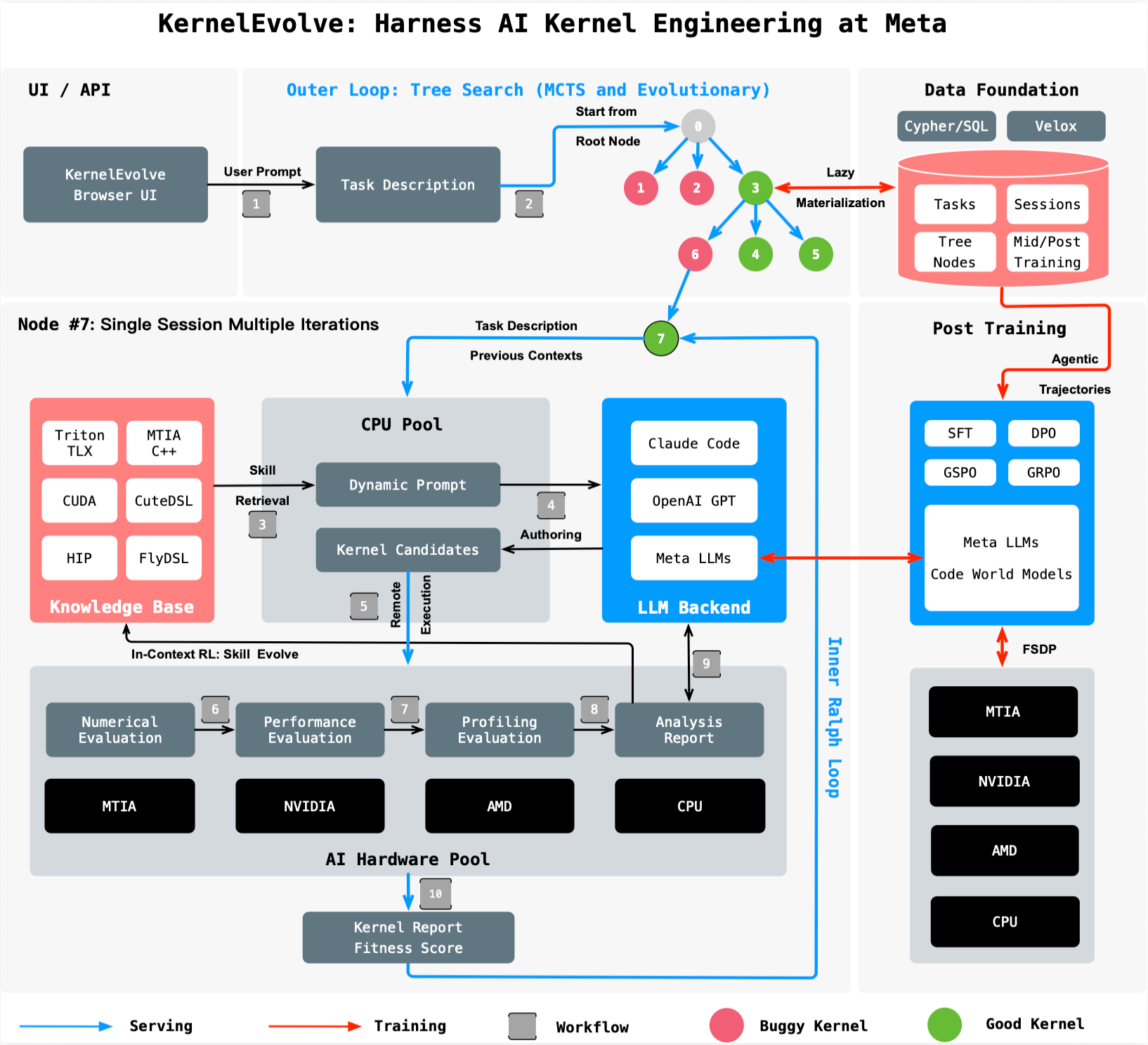

“KernelEvolve treats optimization as a search problem, using an LLM-driven loop to evaluate hundreds of candidate kernels far faster than human experts,” said a Meta spokesperson.

The agent is part of Meta’s broader Ranking Engineer Agent series, designed to autonomously accelerate AI infrastructure. KernelEvolve focuses on the critical task of authoring and tuning kernel code for Meta’s diverse fleet of chips, including NVIDIA and AMD GPUs, plus custom MTIA silicon.

Background: The Kernel Optimization Crisis

Kernels are low-level programs that translate high-level AI model operations into instructions specific to each chip. As Meta deploys new chip generations and ML architectures, each requires fresh kernel development.

Vendor libraries cover standard operators like GEMMs and convolutions, but production ranking models demand many custom operators. Because the number of kernels to tune scales with the product of model count and hardware types, and both keep growing, manual tuning by kernel experts no longer scales.

KernelEvolve was built to address this volume. It uses a purpose-built job harness to evaluate each candidate kernel, feeds diagnostics back to the LLM, and drives a continuous search over hundreds of alternatives. The results exceed human expert performance across both public and proprietary hardware.
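The loop described above (propose a candidate, evaluate it, feed the result back, repeat) can be illustrated with a toy sketch. This is not Meta's implementation: `evaluate` is a hypothetical stand-in for the job harness that uses a made-up cost model instead of real hardware, `propose` is a random mutation step standing in for the LLM, and the `block` and `warps` parameters are invented for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class Result:
    config: dict        # candidate kernel parameters (hypothetical)
    ok: bool            # did the candidate "compile and run"?
    latency_ms: float   # measured cost; lower is better
    diagnostics: str    # feedback text a real agent would hand to the LLM


def evaluate(config: dict) -> Result:
    """Stand-in for the job harness: a toy cost model, not real hardware."""
    block, warps = config["block"], config["warps"]
    if block * warps > 2048:
        return Result(config, False, float("inf"), "resource limit exceeded")
    latency = abs(block - 128) / 32 + abs(warps - 4) * 0.5 + 1.0
    return Result(config, True, latency, f"latency={latency:.2f}ms")


def propose(history: list, rng: random.Random) -> dict:
    """Stand-in for the LLM: mutate the best candidate seen so far."""
    if not history:
        return {"block": 32, "warps": 1}
    ok_runs = [r for r in history if r.ok]
    parent = min(ok_runs, key=lambda r: r.latency_ms) if ok_runs else history[-1]
    cfg = dict(parent.config)
    key = rng.choice(["block", "warps"])
    # Double or halve one parameter, never dropping below 1.
    cfg[key] = max(1, cfg[key] * 2 if rng.random() < 0.5 else cfg[key] // 2)
    return cfg


def search(budget: int, seed: int = 0) -> Result:
    """Run `budget` propose/evaluate rounds and return the best valid result."""
    rng = random.Random(seed)
    history = []
    for _ in range(budget):
        candidate = propose(history, rng)
        history.append(evaluate(candidate))  # diagnostics are kept as feedback
    return min((r for r in history if r.ok), key=lambda r: r.latency_ms)


if __name__ == "__main__":
    best = search(budget=200)
    print(best.config, best.diagnostics)
```

In a real system the mutation step would be replaced by a model that reads the diagnostics, and the cost model by compilation, correctness checks, and timing on the target chip.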

What This Means for AI Infrastructure

Faster Development

What once took weeks of profiling, optimizing, and cross-hardware debugging now happens in hours. Engineers are freed to focus on higher-level model innovations.

Better Performance

Beyond the 60% inference gain on Andromeda, KernelEvolve achieved a 25% training throughput improvement on MTIA chips. These gains compound across Meta’s enormous serving fleet.
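To see what a throughput gain means in hardware terms, a back-of-the-envelope sketch helps. It assumes a fixed workload and that the reported gain applies to the whole job, neither of which the announcement states; the function name is illustrative.

```python
def hardware_fraction_needed(throughput_gain: float) -> float:
    """Fraction of the original device-hours needed to serve the same
    workload after a relative throughput gain (0.60 means +60%)."""
    return 1.0 / (1.0 + throughput_gain)


if __name__ == "__main__":
    for label, gain in [("inference +60%", 0.60), ("training +25%", 0.25)]:
        frac = hardware_fraction_needed(gain)
        print(f"{label}: {frac:.1%} of original device-hours, {1 - frac:.1%} saved")
```

Under those assumptions, a 60% throughput gain corresponds to 37.5% fewer device-hours for the same load, and a 25% gain to 20% fewer.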

Broad Applicability

The agent optimizes across NVIDIA GPUs, AMD GPUs, MTIA chips, and CPUs. It generates kernels in high-level DSLs like Triton, CuTe DSL, and FlyDSL, as well as low-level languages including CUDA, HIP, and MTIA C++.

“KernelEvolve is generally applicable to a range of AI models beyond Ads Ranking,” the spokesperson added. A paper detailing the system will appear at the 53rd International Symposium on Computer Architecture (ISCA) 2026.

The Ranking Engineer Agent Ecosystem

The announcement is the second post in Meta's Ranking Engineer Agent blog series. The previous post introduced the agent's ML exploration capability, which autonomously designs, executes, and analyzes ranking model experiments. KernelEvolve complements that by optimizing the infrastructure layer.

“Together, these agents form a self-improving loop for ad ranking innovation,” the Meta spokesperson said. “The results speak for themselves.”

With billions of AI-powered experiences served daily, Meta is betting that autonomous optimization will change how large-scale AI systems are built and maintained.